We’ve known since 2008 that the acceleration of dividends can be used to better explain how stock prices behave, but we’ve never really explored whether it can also tell us about the relative health of the U.S. economy.

Until now! Hypothetically, we should see some sort of correlation between changes in the rate of dividend growth for large, publicly traded firms and the growth rate of real GDP in the U.S. In times of decelerating dividend growth (or negative acceleration), we should see low GDP growth rates, and in times of positively accelerating dividend growth, we should see higher rates of real GDP growth.

Alternatively, the null hypothesis would be that there’s not a significant or strong enough correlation between the two for that kind of exercise to be worthwhile.

Today, we’re going to do a quick, first-pass analysis using a limited set of data to see how worthwhile it might be to explore the hypothesis further. We’ll use our real GDP temperature chart, with data going back to the first quarter of 2000, to sort the annualized one-quarter, inflation-adjusted GDP growth rates that the BEA reports each quarter into “cold”, “cool”, “moderate”, “warm” and “hot” levels of real GDP growth.

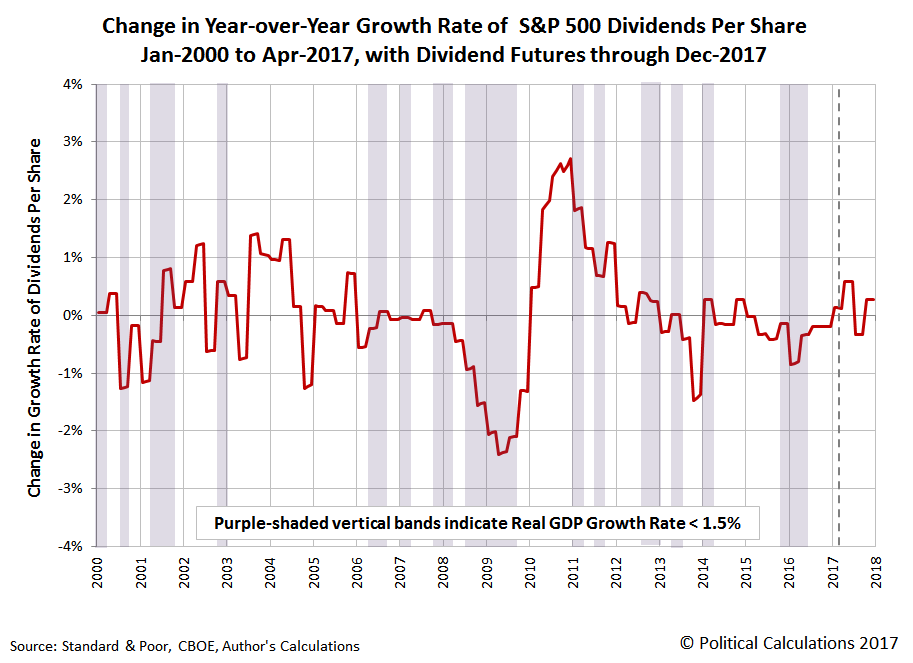

In our next chart, which shows the change in the year-over-year growth rate of the S&P 500’s trailing year dividends per share, we’ve indicated the periods where real GDP growth dropped into the “cold” range, meaning real economic growth in the U.S. was less than or equal to 1.5%. This visual presentation should quickly tell us if there’s any kind of basic correlation.
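
For anyone who wants to build the same two series from their own data, here’s a minimal Python sketch of the calculation described above. It treats the “change” in the year-over-year growth rate as a quarter-to-quarter difference, which is an assumption, and the function names and sample figures are made up for illustration. They are not actual S&P 500 or BEA data.

```python
# Illustrative sketch only: the sample figures below are invented.
COLD_THRESHOLD = 1.5  # percent; "cold" means real GDP growth <= 1.5%


def yoy_growth(series, lag=4):
    """Year-over-year percent growth of a quarterly series (4-quarter lag)."""
    return [
        100.0 * (series[i] / series[i - lag] - 1.0)
        for i in range(lag, len(series))
    ]


def dividend_acceleration(trailing_dps, lag=4):
    """Quarter-to-quarter change in the year-over-year growth rate.

    Assumption: "change" is measured from one quarter to the next.
    """
    growth = yoy_growth(trailing_dps, lag)
    return [growth[i] - growth[i - 1] for i in range(1, len(growth))]


def is_cold(gdp_growth_rates, threshold=COLD_THRESHOLD):
    """Flag quarters whose annualized real GDP growth is at or below the threshold."""
    return [rate <= threshold for rate in gdp_growth_rates]


if __name__ == "__main__":
    # Hypothetical trailing-year dividends per share, one value per quarter.
    sample_dps = [10.0, 10.2, 10.4, 10.6, 11.0, 11.1, 11.2, 11.3]
    print(dividend_acceleration(sample_dps))

    # Hypothetical annualized real GDP growth rates, in percent.
    print(is_cold([3.0, 1.5, -0.5, 2.1]))  # [False, True, True, False]
```

Eight quarters of dividend data yield only three acceleration values here, because the first four quarters are consumed by the year-over-year comparison and one more by the differencing.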

Looking over this second chart, we have 14 periods of one quarter or longer where the real GDP growth rate dropped into the cold zone. We also see 14 periods where the change in the rate of growth of the S&P 500’s trailing year dividends per share dropped into negative territory.

However, the two sets of negative occurrences don’t align particularly well: we have some periods with negative dividend growth acceleration without corresponding cold GDP levels, and vice versa. This visual check tells us that there’s not necessarily a strong enough correlation between the two sets of data to be worth evaluating further. The next step is to note our results so that others engaged in similar analysis can take them into account in shaping their own work.
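
A visual check like this one can be backed up with a simple count. The Python sketch below tallies how often negative dividend acceleration and cold GDP growth coincide, then computes the phi coefficient, a standard correlation measure for two yes/no series. The function names and the sample flags are hypothetical. They are not the data behind the charts.

```python
from math import sqrt


def contingency_counts(neg_accel, cold_gdp):
    """Count quarters in each cell of the 2x2 table for two boolean series."""
    pairs = list(zip(neg_accel, cold_gdp))
    both = sum(a and c for a, c in pairs)
    accel_only = sum(a and not c for a, c in pairs)
    gdp_only = sum(c and not a for a, c in pairs)
    neither = sum(not a and not c for a, c in pairs)
    return both, accel_only, gdp_only, neither


def phi_coefficient(both, accel_only, gdp_only, neither):
    """Phi coefficient for a 2x2 table; ranges from -1 to 1, 0 means no association."""
    numerator = both * neither - accel_only * gdp_only
    denominator = sqrt(
        (both + accel_only)
        * (gdp_only + neither)
        * (both + gdp_only)
        * (accel_only + neither)
    )
    # Undefined when either series never changes value.
    return numerator / denominator if denominator else float("nan")


if __name__ == "__main__":
    # Hypothetical quarterly flags, invented for illustration.
    neg_accel = [True, True, False, False, True, False, False, True]
    cold_gdp = [True, False, False, True, False, False, True, True]

    counts = contingency_counts(neg_accel, cold_gdp)
    print(counts)                    # (2, 2, 2, 2)
    print(phi_coefficient(*counts))  # 0.0
```

A phi value near zero, as in this invented sample, is what poorly aligned series would produce, while values near 1 would indicate that the two sets of flags tend to occur together.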

Sure, we could follow the example of the shockingly high percentage of medical and psychological studies that fail to be replicated because the authors might have p-hacked their way to statistically significant outcomes that they could publish. We, however, find it more useful to publish the path that led to the apparent dead end. While it’s not likely to ever be picked up by any journal, we can at least save others a lot of time by identifying what doesn’t work or which approaches don’t appear to offer much in the way of promise for either practical application or future work.

That’s the very real economic value of publishing results that fail to uphold the main hypothesis (or rather, that fail to reject the null hypothesis). It’s nowhere near as glamorous as selectively publishing the kind of results that might get trumpeted throughout the media, but often, it’s much more valuable.